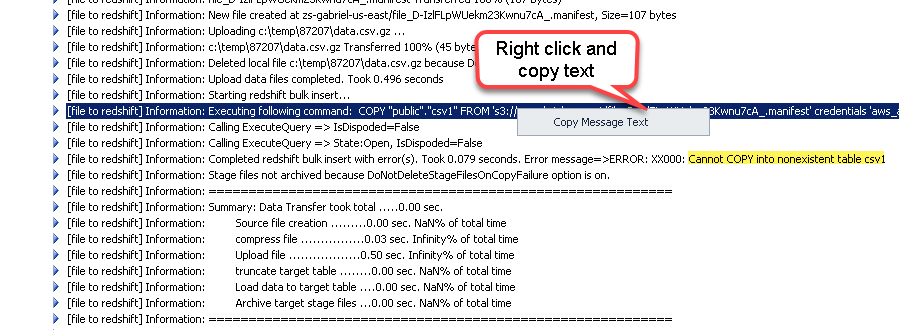

I am trying to load a file from S3 to Redshift. The file is pipe-delimited, but some values contain pipes and other special characters; if a value contains a pipe, it is enclosed in double quotes. This issue only affects the COPY command.

Network: connected to OpenVPN, via SSH port tunneling. Redshift cluster type: Multi Node. Client environment: Aginity Workbench for Redshift.

The COPY command appends the new input data to any existing rows in the target table. It reads and loads data in parallel from a file or multiple files in an S3 bucket. You can take maximum advantage of parallel processing by splitting your data into multiple files, in cases where the files are compressed.

's3://copyfroms3manifestfile' specifies the Amazon S3 object key for a manifest file that lists the data files to be loaded. This argument must explicitly reference a single file, for example, 's3://mybucket/manifest.txt'.

If you want all the rows added in a single COPY command to have the same value of jobid, you can COPY the data into a staging table, add the jobid column to that table, and then insert all the data from the staging table into the final table, like: CREATE TABLE destinationstaging (LIKE destination); ALTER TABLE destinationstaging DROP COLUMN jobid.

The UNLOAD command automatically encrypts files using SSE-S3. You can also unload using SSE-KMS or client-side encryption with a customer managed key.

Fields containing line breaks (CRLF), double quotes, and commas should be enclosed in double quotes. For example: "aaa","b CRLF bb","ccc" CRLF zzz,yyy,xxx. So a valid CSV can have multi-line rows, and the correct way to import it in Redshift is to specify the CSV format option. This also assumes that all columns having the quote character in.

In summary, use a different client like SQL Workbench and run the COPY command from there.
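The quoting rules described above can be checked locally before a load is attempted. The following is a minimal sketch using Python's standard `csv` module, not Redshift itself; the sample strings and the `parse` helper are hypothetical and made up for illustration, and Redshift's own CSV parser may differ in details.

```python
import csv
import io

# Hypothetical sample data (not taken from a real load):
# a comma-separated record whose second field contains a CRLF inside quotes,
# and a pipe-delimited record whose second field contains a pipe inside quotes.
comma_data = '"aaa","b\r\nbb","ccc"\r\nzzz,yyy,xxx\r\n'
pipe_data = '1|"left|right"|end\r\n'


def parse(text, delimiter):
    """Parse delimited text, treating double-quoted fields as single values."""
    # newline="" leaves line endings untouched so the csv module can keep
    # line breaks that appear inside quoted fields.
    stream = io.StringIO(text, newline="")
    return list(csv.reader(stream, delimiter=delimiter, quotechar='"'))


comma_rows = parse(comma_data, ",")
pipe_rows = parse(pipe_data, "|")

for row in comma_rows + pipe_rows:
    print(len(row), row)
```

Under these assumptions, the quoted line break stays inside one field rather than starting a new row, and the quoted pipe is not treated as a delimiter, which is the behavior a CSV-aware load relies on.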

I know this is an old thread, but for anyone coming here because of the same issue: I've realised that, at least in my case, the problem was the Aginity client. It is not related to Redshift or its Workload Manager, only to the third-party client, Aginity.

For more information about Amazon S3 encryption, see Protecting Data Using Server-Side Encryption and Protecting Data Using Client-Side Encryption in the Amazon Simple Storage Service User Guide.
